Analyzing Anthropic's...Analysis

The good, the bad, and the ugly of their labor market study

Anthropic released a research study in early March titled ‘Labor market impacts of AI: A new measure and early evidence.’ They explore which jobs are most at risk of automation, and then whether exposed jobs have lower hiring rates.

How’d they do this? Anthropic looked at whether usage of Claude (which was only released in 2023) correlates with changes in the BLS’s labor market projections (which are only updated annually, and therefore were only updated twice between the release of Claude and the release of this paper).

I’ve seen so many different takes on this paper, which is a testament to how divisive AI is. Some people read this paper, whose conclusions are basically “null effect,” and said AI was coming for our jobs. Some people read this paper and had the reaction that Anthropic was going for, which was: “Hey, it’s not that bad!”

My reaction?

WTF is this paper doing?

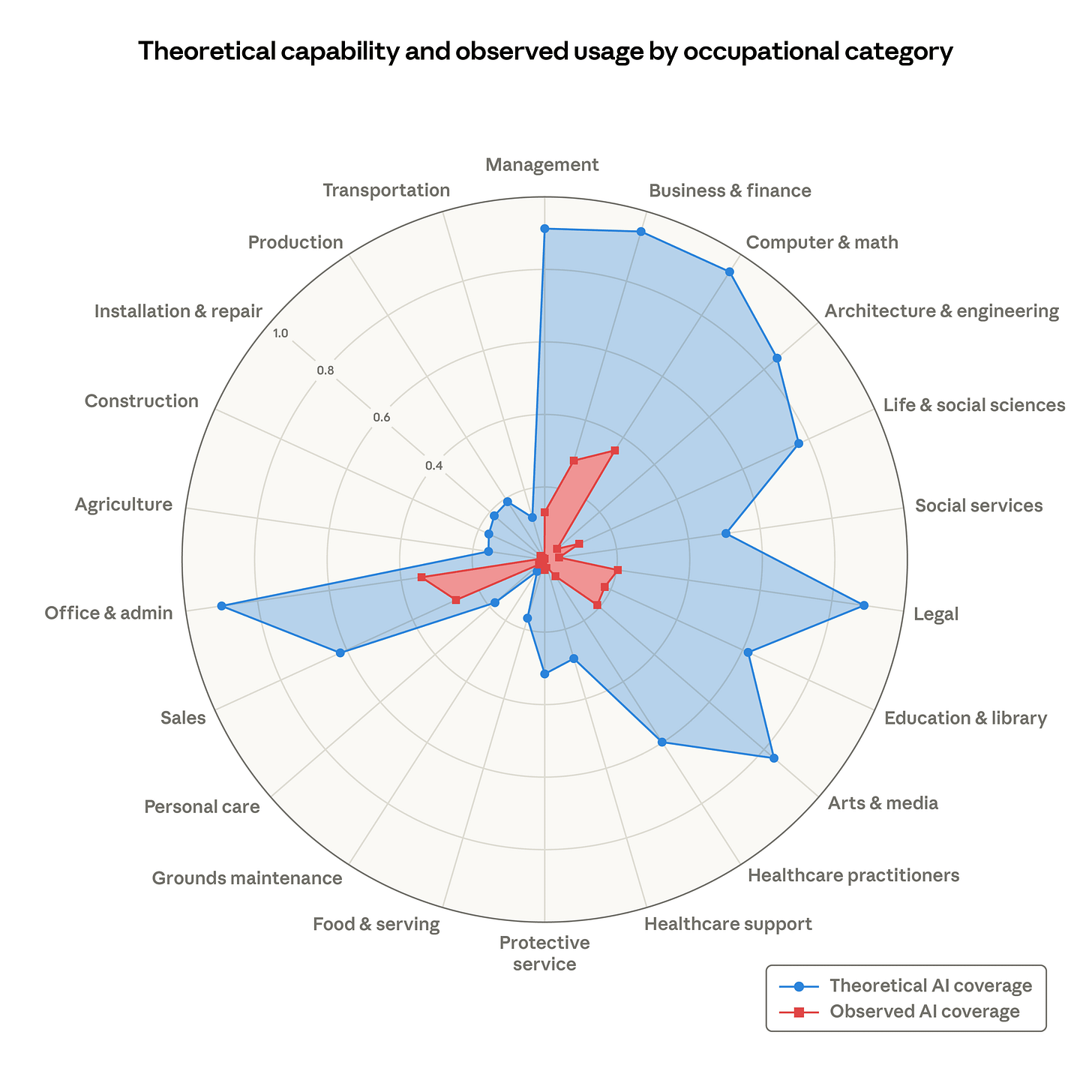

Anthropic’s findings are that the most penetrated professions (software engineering, sales & admin, legal, arts & media, etc.) haven’t seen a noticeably larger increase in unemployment rates than ‘no exposure’ roles, like agriculture, transportation, production, etc.

The problem is that the paper is stupid and clearly has an angle. Let’s dig into why it is so fundamentally flawed that it’s comical, why we’re upset about it, and what is still interesting about it.

Part I: What’s wrong with this analysis?

The first obvious flaw is that the authors use projections updated in August 2025. BLS projections assume “full employment” (source: BLS); i.e., they assume everyone who wants a job will find a job.

By its own admission, the BLS assumes gradual productivity changes and stable increases in employment. The BLS forecasts are also only updated annually, and the update Anthropic’s paper relies on was August 2025.

That means that Anthropic basically looked at usage of a single tool (Claude), identified a few key exposed industries, and then said “In the 2–3 years that our product has been available, we do not see a difference in projected hiring patterns between exposed and non-exposed professions…that were projected before we launched our tool and revised just once.”

To reiterate:

Anthropic is not looking at actual employment data. They’re looking at projections that the BLS itself acknowledges are a bad data set to use when looking at short-term, volatile time horizons.

Anthropic assumes that the BLS projections have taken into account the impact of AI on hiring, when they haven’t: These projections are expressly not suited to this kind of analysis.

They’re also looking at a tiny sliver of time: basically a 12-month time frame, given the frequency of BLS updates (even if they try to allude to a longer time horizon, the constraint is BLS projections).

They’re also not looking at the whole host of AI tools and usage, just Claude usage, to determine exposed industries.

They only look at BLS projections in the US, and therefore miss the tsunami hitting jobs we already offshored, like customer service or software engineering.

Part II: Why am I getting so hung up on this?

Look, I respect Anthropic. Of the large LLM developers, I genuinely believe that they are the least opportunistic (which isn’t saying much when your competition is Sam Altman, but it must still be said).

But it’s precisely because Anthropic is trying to frame themselves like this that I am dubious of what they are saying, which is essentially: “You all think AI will take your jobs! But you shouldn’t be worried unless maybe you’re an entry-level employee in this one specific industry, and even then, our findings are insignificant.”

The idea is that we should stop worrying so much about the negative macroeconomic effects of AI and just strap in for the productivity gains!

I so desperately want to drink this Kool-Aid. It would make everything better. But we all feel it in our bones that these findings may be misleading, and of course they are! Anthropic put it out. This is like Big Tobacco publishing a study on the benefits of a pack-a-day habit.

Companies in 2026 have announced massive layoffs, attributing them to AI. The job market is more competitive than ever, and economists like Dr. Erik Brynjolfsson (whom Anthropic’s researchers cite) and Dr. Nela Richardson have warned that AI is taking jobs.

Part III: Surely some of this analysis is useful?

Yes. If you’re a regular reader, you’ll know that we are firm believers in “yes, and”. Yes, this analysis is a flawed piece of propaganda, but it also has learnings about careers that are exposed to AI. “Exposed” can mean that AI tools can complement the work, but, as Dr. Brynjolfsson finds, it can also decimate career ladders (particularly for young people at the bottom).

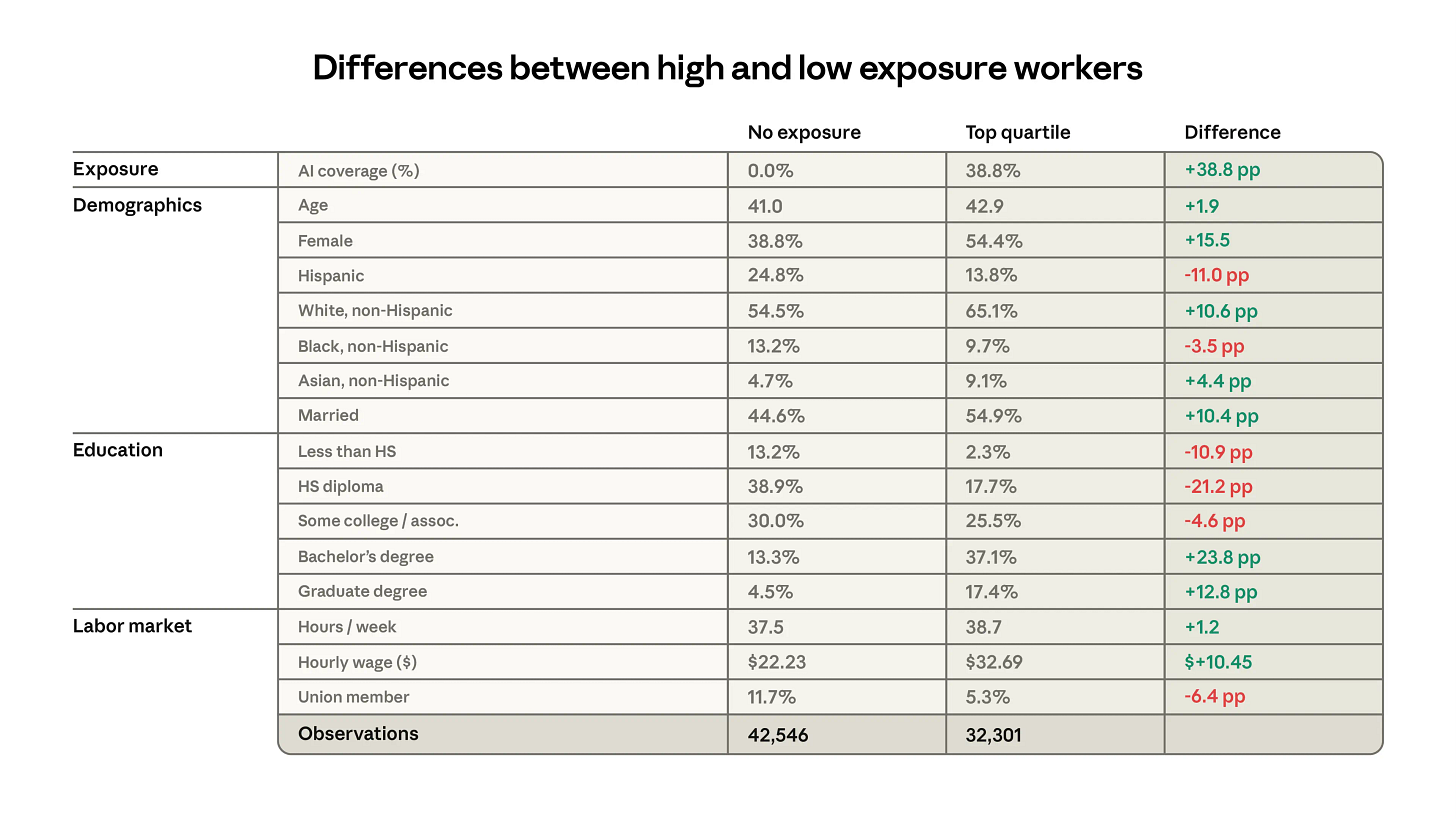

AI will primarily displace knowledge-economy office work. This is work that has been incredibly well-suited for women and has helped lead to generational reductions in the wage gap between college-educated white men and white women.

Women’s representation is 15.5 percentage points higher in heavily exposed industries than in non-exposed ones. For white people, the gap is 10.6 percentage points. For college-educated people, it is 23.8 percentage points.

Other interesting learnings? Union members are protected! Largely because they over-index in trades, but still. Careers that require a high school diploma or less are protected. Basically, we all need to go into highly manual trades. Or at least, our kids will.

We wish this table were broken out more, but the data set wasn’t provided, and the appendix had nothing on this demographic breakdown. Black people seem to be insulated, but I’m sure if you look at this by gender, Black women will also be exposed to AI dislocation.

This is really the root of my worry, then. I talk about it a lot. AI will unleash productivity. But it will also, if hiring trends continue, destroy early-career ladders and career ladders for women and minorities. Economists and policy planners in China are freaking out for similar reasons (even as the government has adopted goals around national “AI penetration”). Advisors in the Philippines and India are sounding the alarm on the eradication of BPO jobs.

I worry that the rosy picture Anthropic has prematurely painted deflects or minimizes the structural changes that are already under way and impossible to stop without acknowledgement and action.