AI Pricing is Confusing AF

AI feature utilization is destroying traditional pricing models. Let's learn from old models.

This weird thing is happening where nobody has any idea how to price AI, despite its ubiquitous use. Most companies, especially the SaaS platforms trying to incorporate AI, are used to subscription services.

Subscription models are popular because they scale infinitely well, and because historically, the marginal cost of producing new features decreases, letting companies capture more revenue per user as they expand.

That’s not the case with AI, where the marginal costs of production don’t net out. In fact, your most active users can become dramatically more expensive to serve. Kyle Poyar, at his Growth Unhinged Substack, estimated that about 70% of AI-native startups were still using a per-user subscription model. Most of the other 30% offer some hybrid model that pairs a per-user subscription with limits on usage, which isn’t much better (it might actually be worse from an end-user messaging perspective).
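To put rough numbers on that, here is a minimal sketch of a flat subscription with usage-driven costs. The price and per-request cost are made-up assumptions for illustration, not figures from any vendor.

```python
# Illustrative only: both numbers below are assumptions, not real vendor data.
MONTHLY_PRICE_CENTS = 2_000      # flat per-user subscription ($20.00)
COST_PER_REQUEST_CENTS = 2       # assumed inference cost per AI request ($0.02)


def monthly_margin_cents(requests: int) -> int:
    """Gross margin on one user for a month, given their AI request count."""
    return MONTHLY_PRICE_CENTS - requests * COST_PER_REQUEST_CENTS


def break_even_requests() -> int:
    """Request count at which a user's AI cost equals their subscription fee."""
    return MONTHLY_PRICE_CENTS // COST_PER_REQUEST_CENTS


for requests in (100, 1_000, 5_000):
    margin = monthly_margin_cents(requests) / 100
    print(f"{requests:>5} requests -> margin ${margin:.2f}")
```

Under these assumptions, every user past the break-even point loses money, and the heaviest users lose the most. That is the opposite of how traditional SaaS power users behave.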

That limit on usage can manifest in a couple of ways: as a limit on time (which is a proxy for usage), or as a direct cap on consumption, such as a credit allowance.

There are so many problems with both of these approaches, but I especially take umbrage at the pay-per-use model (particularly Monday.com’s, below).

Surely this is fair?

That’s what some FP&A manager is saying somewhere. “Paying for usage is actually the most fair,” they argue, pushing their glasses up the bridge of their nose. “Because the customer isn’t leaving money on the table. They’re only paying for consumption.”

“Plus,” they whisper conspiratorially, “we get to see the supply and demand curve perfectly.”

This argument is cogent: People only pay for what they consume, like gas! Traditional SaaS has close to zero marginal cost of production, whereas AI costs scale with usage. “Obviously we can’t treat pricing the same,” that finance manager argues.

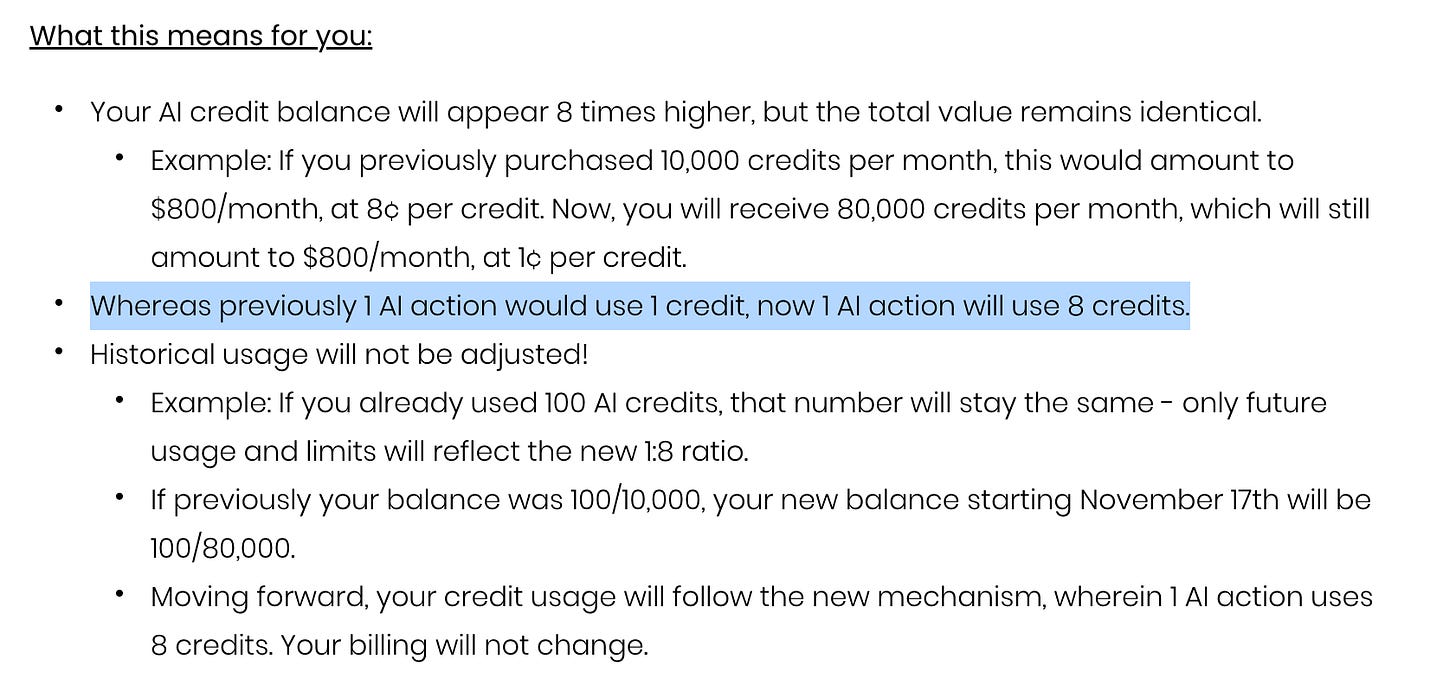

The problem is that as models change, or as the plumbing between LLMs becomes cheaper to build, prices will fluctuate, just like Monday.com’s changes to their AI pricing model below.

This creates a poor experience for the end user, who now has to do back-of-the-napkin math to figure out (in the case of Monday.com) how to divide their previous credit consumption by 8 (or whatever factor of credits a company suddenly shifts to).
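That napkin math looks something like the sketch below. The factor of 8 comes from the Monday.com example above; the function name and the sample usage figure are hypothetical.

```python
# Hypothetical rebasing helper. Only the factor of 8 comes from the example
# in the text; the names and the sample usage number are assumptions.
REBASE_FACTOR = 8


def rebase_credits(old_credits: float, factor: int = REBASE_FACTOR) -> float:
    """Convert a usage figure from the old credit unit to the new one."""
    return old_credits / factor


old_monthly_usage = 2_400  # credits consumed last month under the old scheme
new_monthly_usage = rebase_credits(old_monthly_usage)
print(f"{old_monthly_usage} old credits = {new_monthly_usage:.0f} new credits")
```

The math is trivial. The point is that every customer has to redo it for every budget, forecast, and internal report each time the unit changes.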

Optimizing for what?

Subscription services might leave money on the table for users, but they also provide predictable utility and stability.

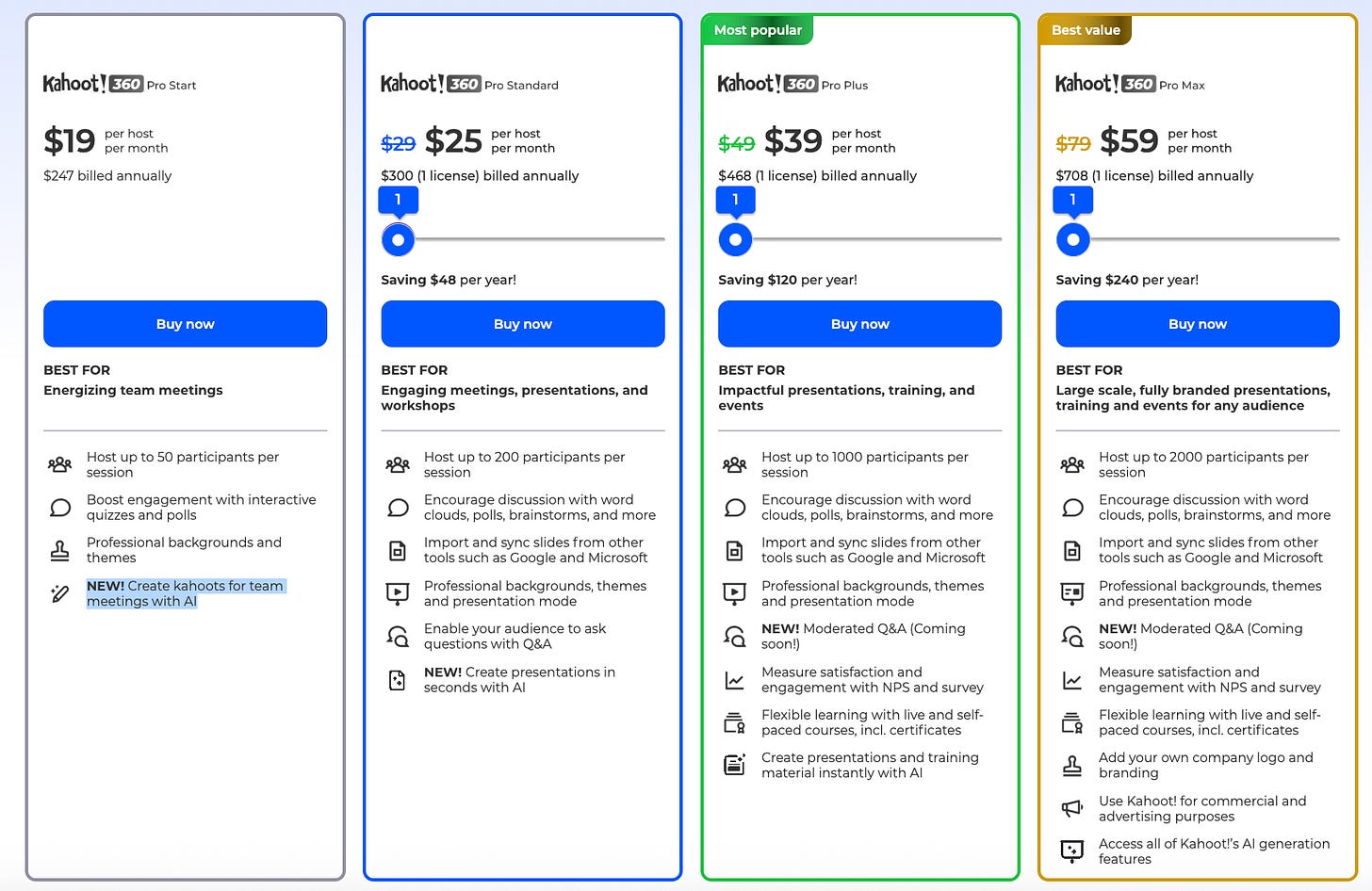

When designing a pricing strategy for AI, companies should start with a cost-based approach. We’ve seen some players, even in EdTech, scale or gate their AI features (like Kahoot!, which previously had free features and then within a month paywalled them).

It’s clear that legacy players who layer in AI features don’t know what the value-add of those features is, in terms of either acquisition or retention.

I spent about 8 hours over the past month trying to build the easiest workflow with Zapier’s AI Copilot (which uses Claude). It was the most frustrating experience ever. Companies are plugging in AI features, but there’s no feedback loop: No ability for me to inform Zapier how sh*t their AI Copilot was, or tell Kahoot!, Wayground, or Nearpod what I felt about their features. They probably just have high-level consumption reporting. I doubt they even know whether AI usage correlates with conversion or stickiness yet.

Which is why they need to buffer with a cost-basis approach baked into their subscription model. Instead of gating consumption, gate features. That makes it clearer to users what they can and can’t do. If I’m trying to build a UX in Figma, and suddenly find that I’ve reached my credit limit, I’ll be much more pissed off than if I can clearly see which AI features are blocked. And this lets you:

- See the supply/demand curve for AI functionality much more clearly
- Figure out how to price or gate AI features that might cost more
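As a rough sketch of what gating features (rather than consumption) could look like, here is a plan-to-feature lookup. The plan names and feature names are hypothetical and not taken from any real product.

```python
# Hypothetical plans and feature names, purely for illustration.
PLAN_FEATURES = {
    "free": {"ai_summary"},
    "pro": {"ai_summary", "ai_image_generation"},
    "enterprise": {"ai_summary", "ai_image_generation", "ai_agents"},
}


def can_use(plan: str, feature: str) -> bool:
    """True if the feature is included in the plan; no credit counter involved."""
    return feature in PLAN_FEATURES.get(plan, set())


def locked_features(plan: str) -> set[str]:
    """Features that exist but are not in this plan, so the gate is visible up front."""
    all_features = set().union(*PLAN_FEATURES.values())
    return all_features - PLAN_FEATURES.get(plan, set())


print(can_use("pro", "ai_agents"))
print(sorted(locked_features("free")))
```

The answer to "can I do this?" depends only on the plan, never on how much the user has already done this month, so nobody gets cut off mid-task.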

Who wins?

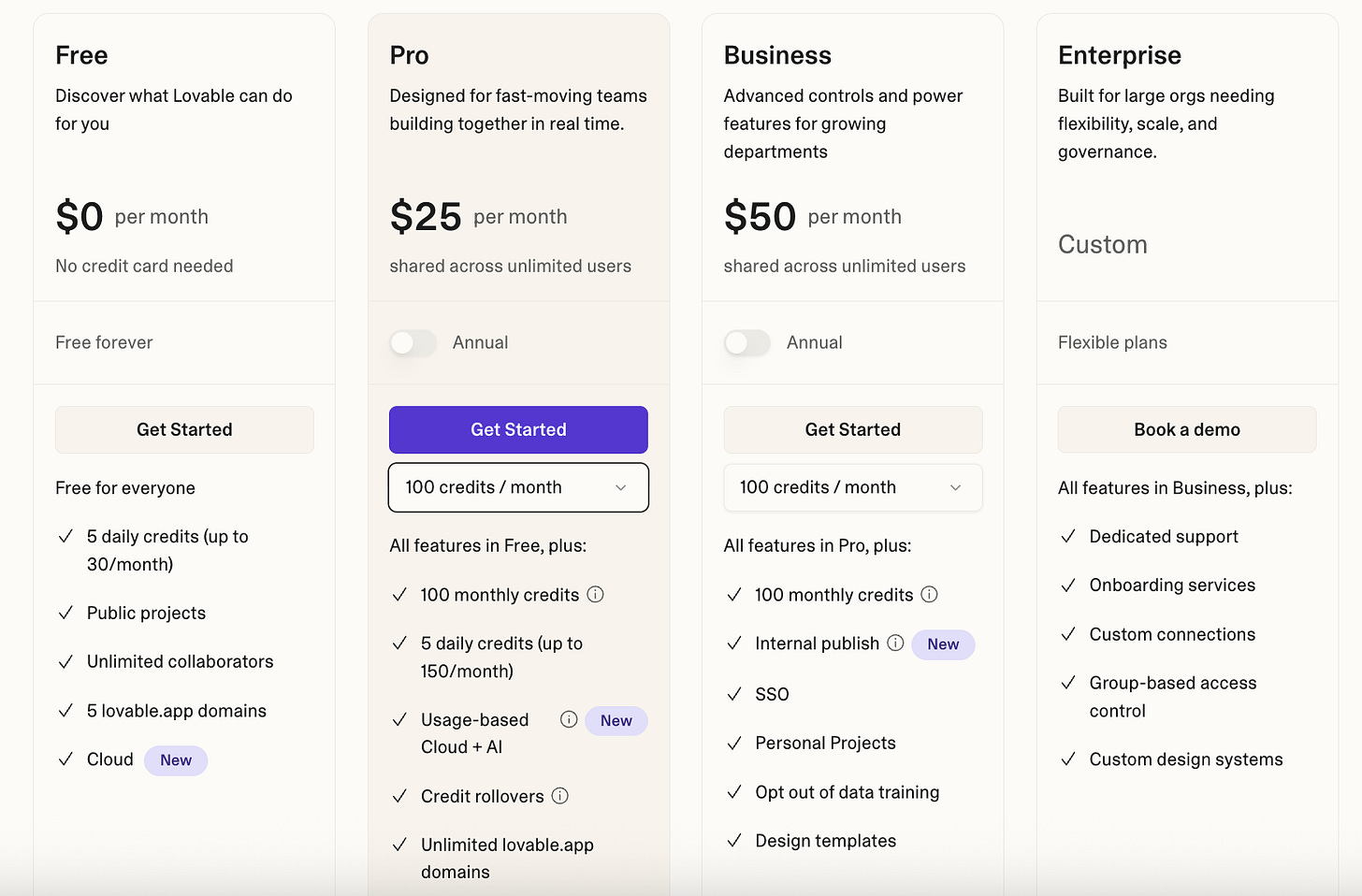

Elena Verna at Lovable, who I respect a TON, hates AI credit models and believes the winners will be companies that can revert to subscription models that have clarity and price stability (the irony is that Lovable has a credit model with limits).

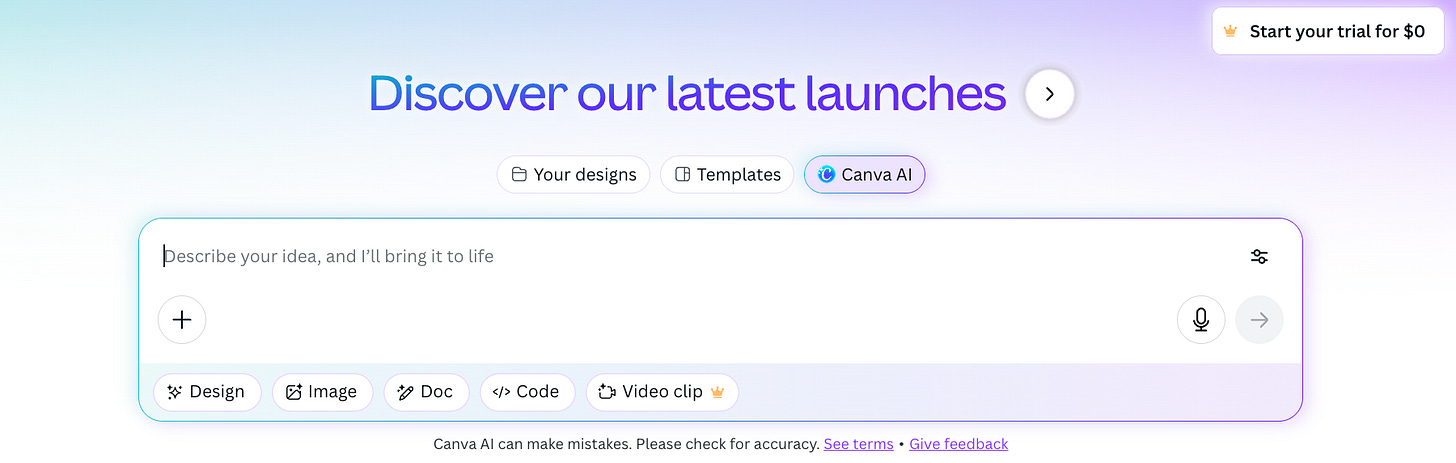

I agree with Elena. But the companies most able to revert to a sustainable subscription model are, ironically, legacy (aka non-AI-native) companies. Think Canva, or even Zapier. Their core businesses already rest on high-value software with low-to-zero marginal costs of production. That means they can subsidize their AI features (and scaling costs) until they figure out how to price them effectively, especially within a product that likely already has a defensible market position.

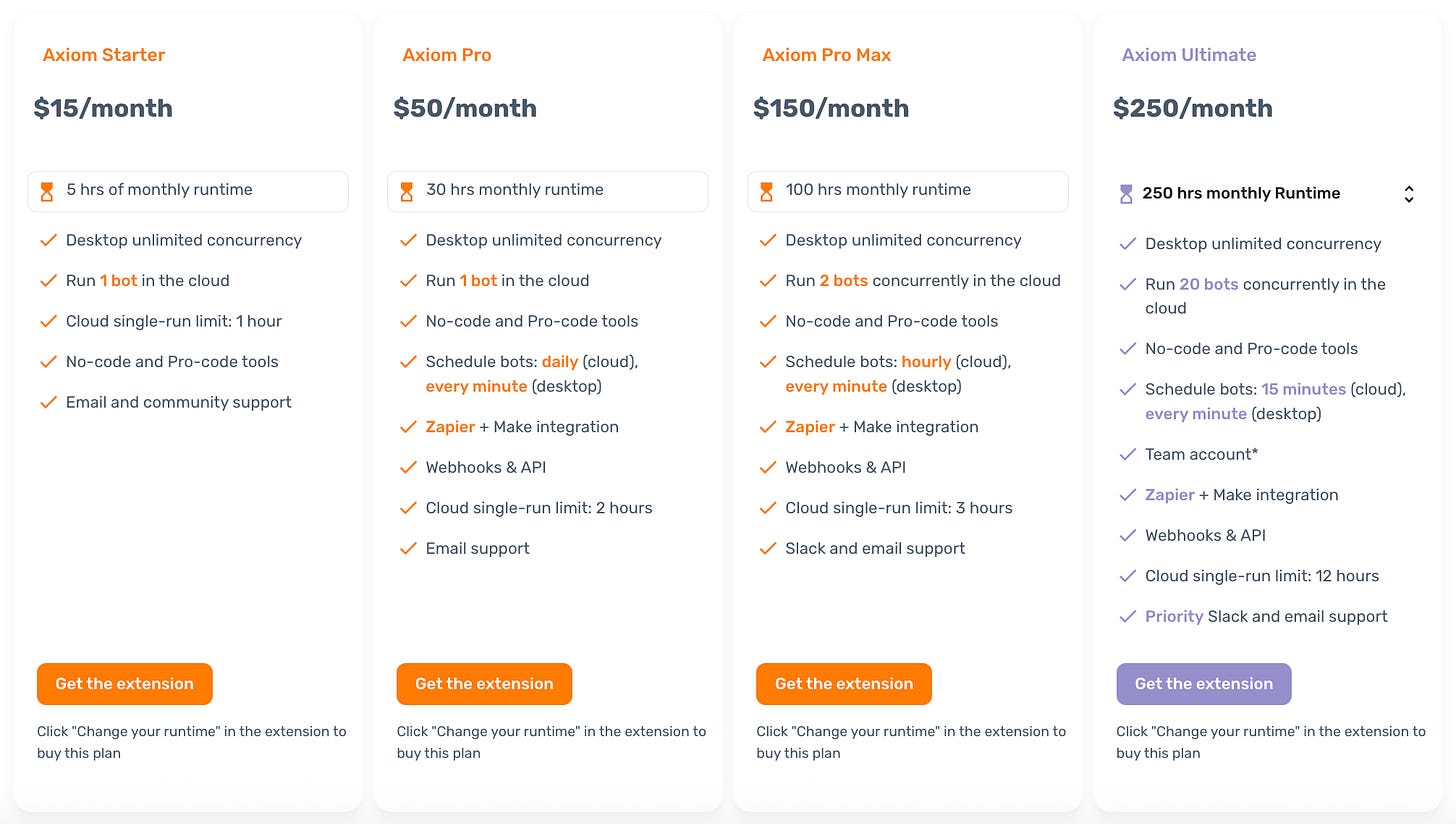

Canva, Zapier, and HubSpot can figure out the pricing for their AI features because they’re generating cash flow. Axiom.AI? MagicSchool? I’m less confident. Commoditization is already an existential threat to wrappers, especially because the LLMs themselves are getting much better (why go to MagicSchool when you can use Gemini at the source, especially if it’s cheaper at an enterprise level once you cut out the middleman?). Burn rate is astronomically high while these AI-native startups chase acquisition and figure out conversion.

Everyone is waiting to see if Nvidia GPUs last as long as they say they do. Almost all of the mega-caps are using these GPUs as collateral based on a 6-year lifespan, but if that’s even a year too optimistic, then the unit economics get destroyed again. And at some point, the subsidies will run out. Data centers get built faster than the energy infrastructure needed to power them, which further complicates pricing strategies. The list goes on.
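The GPU lifespan point is simple arithmetic. Here is a minimal sketch that assumes straight-line depreciation and a made-up $30,000 purchase price. Both are my assumptions for illustration; only the 6-year figure and the "one year too optimistic" scenario come from the paragraph above.

```python
# Assumptions: straight-line depreciation and a $30,000 GPU. Neither number
# nor method is sourced; they only illustrate the sensitivity to lifespan.
GPU_COST_DOLLARS = 30_000


def annual_depreciation(cost: float, lifespan_years: int) -> float:
    """Straight-line depreciation: the same share of cost is expensed each year."""
    return cost / lifespan_years


planned = annual_depreciation(GPU_COST_DOLLARS, 6)   # lifespan as modeled
actual = annual_depreciation(GPU_COST_DOLLARS, 5)    # one year too optimistic
increase_pct = (actual - planned) * 100 / planned

print(f"Planned: ${planned:,.0f}/yr, actual: ${actual:,.0f}/yr "
      f"(+{increase_pct:.0f}% annual hardware cost)")
```

Under these assumptions, losing a single year of useful life raises the annual hardware cost by a fifth. That cost has to land somewhere, and per-generation prices or credit limits are the obvious place.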

Suddenly, AI-native companies will have to impose different credit consumption limits or different price-per-generation calculations. Lovable is able to keep costs down because they can optimize the plumbing that gets your instructions to the LLMs, but only so much of that is within their locus of control.

It’s ironic that with a technology as future-forward as AI, I’m reminded of the adage that rolled out with another world-shaking technology: Mobile phones. We can learn a lot about the types of pricing that worked. Laggards would charge by the minute. The winners?

“No hidden fees.”