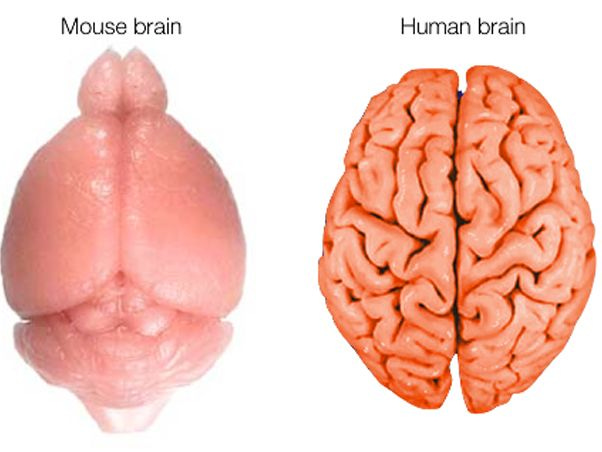

AI Makes Smooth Brains

The Catch-22: AI is great to use if your brain is NOT smooth!

The end goal that many technologists espouse is that AI will lead to agents capable of working and thinking better than most humans, thereby making working and thinking irrelevant for the vast majority of humans.

On the way, it might lead to Skynet. And in the short term, it might lead to a bubble that may or may not be bigger than the dot-com bust.

Understandably, we’re seeing a growing backlash against AI. I myself often question whether the societal benefits are worth the risks, which is just good due diligence, even for the enthusiasts.

What makes EdTech good vs. bad? What makes some AI good, and some AI bad?

It’s all about the outcomes we expect. Unfortunately, many AI tools do one thing really well: They smooth the path from input to output, removing all of the aspects that make us good learners and okay humans. No more productive struggle, no more critical thinking, no more failures.

Just one optimized path, devoid of the roadblocks and snags around which our brains can grow.

A smooth (brain) path.

Garbage Out, Garbage In

It’s not hard to find examples of technology that transformed learning. When the first chalkboard was put up in Edinburgh in 1801, it was a massive improvement over individual chalk slates. Chalkboards led to dry-erase boards, which are integral to Building Thinking Classrooms, and to smart boards, which changed my own teaching practice considerably.

The problem isn’t that we’re incapable of developing good technology. The problem is that when we try to control for certain outcomes, we start to control for specific inputs. And the outcomes we are controlling for are smooth-brain outcomes.

At a certain point, we went from asking students to think critically to asking them to perform like automatons, because it is easy to measure the performance of automatons: yes or no, correct or incorrect.

And so we start teaching to tests that are reductive. I did it. Every teacher has done it, especially in a high-stakes testing environment. I need my kids to perform well on the summative assessments we have collectively decided will dictate their lives (the Regents Exams, the SAT, etc.). And because that is the outcome I am aiming for, my inputs will be things that help them get those questions correct, not things that help them think critically. This predates AI, and it means the ground we deployed AI into was already fertile for smooth-brain thinking.

Take the SAT, for example, which is the ultimate outcome we are controlling for in K–12 education. For a whole host of shitty reasons, the long arc of a student’s academic history gets distilled into this perverse testing environment.

Several years ago, Dr. Les Perelman of MIT found that SAT graders don’t really score the essay portion critically: they score it largely on length. Write an intro, three supporting paragraphs, and a conclusion, and you get a good grade, even if the essay is filled with factual inconsistencies and errors.

I realized I could score [a student’s SAT essay] before I read it because just a certain length was always a certain score. So being from MIT, where numbers are very important, I counted the words, put the number of words and the scores into an Excel spreadsheet and discovered that the correlation was the highest I've ever seen in test data. - Dr. Les Perelman, MIT

Want to know what else can write really structured, perfectly formulated essays filled with inconsistencies? You guessed it.

We basically created an environment where the outcomes we test kids and adults for can be produced just as well by this new product.

The Worst EdTech Tools

And so the worst tools we develop are the ones that smooth the path to those misguided outcomes. A student’s college GPA helps determine what job they’ll get, so students take the easiest classes possible, or they use SparkNotes or Chegg (and now Google Gemini or ChatGPT) to cheat.

What other tools suck for learning aside from Chegg? ChatGPT and Google Gemini, despite what those companies’ marketing departments will tell you. I have seen so many students upload their homework to ChatGPT and have it give them the answers. There is no productive struggle. No sharp corner upon which to cut your brain and build scar tissue.

We are literally building smooth brains!

And these companies are now giving their products away for FREE, or paying millions to drive adoption among the very demographics whose brains are actively trying to grow ridges and folds. They’re paying campus ambassadors, many of whom hold student government positions, to promote their LLMs. This is BAD! This is maybe as bad as letting gambling sites advertise on college campuses!

A few weeks ago, Matthew Connelly, the vice dean for AI initiatives at Columbia University, wrote a guest piece in the NYT called “AI Companies are Eating Higher Education.” He argues, in much better-articulated terms, that the people who use AI best are the people with non-smooth brains.

But research suggests that students using A.I. do not read as carefully when doing research and that they write with diminished accuracy and originality. Students do not even realize what they are missing. But educators and employers know. Reading closely, thinking critically and writing with logic and evidence are precisely the skills people need to realize the bona fide potential of A.I. to support lifelong learning. - Matthew Connelly

This is the Catch-22. AI is a good tool if you have a non-smooth brain. But AI makes your brain smooth.

It is therefore imperative to tightly control AI use while brains are still developing. Which we are not doing.

AI can be good. There are good tools out there that don’t make brains smooth! ROYO.ai, for example, generates decodable books for students based on their individual needs. This is a good use of AI for a number of reasons. It doesn’t do the thinking or the heavy lifting for kids: it creates content tailored to them. Also, decodable books aren’t meant to be beautifully written or wonderfully illustrated, so the risk of cannibalizing creative jobs is low.

Other good uses of AI? Dan Meyer has pioneered a product at Amplify called Discussion Moments. His blog explains it in detail, but in short, a teacher uses AI in the moment to synthesize and sort student responses, so the teacher knows exactly which questions to ask to foster rich discourse.

This helps student thinking!

More brain folds, please! So the next time you are evaluating whether your child, school, or company should use AI, think about two things:

How developed are my constituents’ brains? Do they already have folds?

Will this AI tool or feature smooth their path? Or will it make them think critically?

If your constituents have brain folds, it’s okay to smooth their path.

If your constituents don’t have brain folds, you want them to struggle. You want the pointed edge of a problem to meet the smooth surface of their brain, and to create that fold.